When we do different activities, we are often interested in how they affect each other. For example, when we visit different links on the Internet, we might want to know how that affects our motivation to do certain things. In other words, we are interested in inferring how important different causes are for an effect, using data about our daily activities.

In this post we will look at a few ways to detect relationships between actions and results using machine learning algorithms and Python.

Our example data will be an artificial dataset consisting of two columns: URL and Y.

URL is our action, and we want to know how it impacts Y. URL can be link0, link1 or link2, meaning which link was visited, and Y can be 0 or 1: 0 means we did not get motivated, and 1 means we got motivated.
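The post's data.csv itself is not shown. If you want to follow along, the sketch below builds a hypothetical dataset with the same two columns. The helper make_visits and its per-link motivation probabilities are made up purely for illustration and say nothing about the author's real data.

```python
import numpy as np
import pandas as pd


def make_visits(n_rows=150, seed=0):
    """Build a hypothetical URL/Y dataset (not the post's actual data.csv)."""
    rng = np.random.default_rng(seed)
    # Each row is one visit to link0, link1 or link2
    urls = rng.choice(["link0", "link1", "link2"], size=n_rows)
    # Assumed chance of getting motivated after each link, for illustration only
    p_motivated = {"link0": 0.2, "link1": 0.5, "link2": 0.8}
    probs = np.array([p_motivated[u] for u in urls])
    # Y is 1 (motivated) with the link's probability, otherwise 0
    y = (rng.random(n_rows) < probs).astype(int)
    return pd.DataFrame({"URL": urls, "Y": y})


df = make_visits()
print(df.head())
# df.to_csv("data.csv", index=False)  # optionally save it for the code below
```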

The first thing we do is one-hot encode link0, link1 and link2 into 0/1 values, which gives us 3 columns as shown below.

So we now have 3 features, one for each URL. Here is the code for the one-hot encoding that prepares our data for the cause and effect analysis.

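```python
import pandas

filename = "C:\\Users\\drm\\data.csv"
dataframe = pandas.read_csv(filename)

# one-hot encode the URL column into URL_link0, URL_link1, URL_link2 as 0/1 integers
dataframe = pandas.get_dummies(dataframe, dtype=int)

# move the target column Y to the end
cols = dataframe.columns.tolist()
cols.insert(len(dataframe.columns) - 1, cols.pop(cols.index('Y')))
dataframe = dataframe.reindex(columns=cols)

print(len(dataframe.columns))

#output
#4
```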

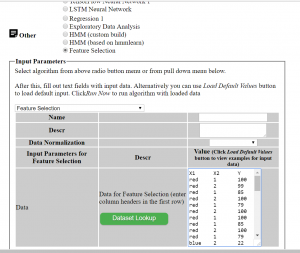
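To see what the encoding step produces without a CSV file, here is a minimal sketch on four hypothetical rows. It assumes a pandas version whose get_dummies accepts dtype=int (newer versions return booleans by default), and it moves Y to the end with a list comprehension instead of the cols.pop/insert trick; both give the same column order.

```python
import pandas as pd

# Four hypothetical visits; the real data.csv has the same two columns
df = pd.DataFrame({"URL": ["link0", "link2", "link1", "link2"], "Y": [0, 1, 0, 1]})

# dtype=int gives 0/1 integers instead of booleans
encoded = pd.get_dummies(df, dtype=int)
print(encoded.columns.tolist())  # untouched Y comes first, dummy columns follow

# Move the target Y to the last position
encoded = encoded[[c for c in encoded.columns if c != "Y"] + ["Y"]]
print(encoded)
```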

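Now we can apply a feature selection algorithm. SelectKBest from sklearn selects features according to the k highest scores; here the score function is the chi-squared statistic (chi2), and k="all" keeps every feature so that we can see all of the scores.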

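```python
import numpy
from sklearn.feature_selection import SelectKBest
from sklearn.feature_selection import chi2

# split the prepared dataframe into features X (URL_link*) and target Y (last column)
array = dataframe.values
X = array[:, 0:len(dataframe.columns) - 1]
Y = array[:, len(dataframe.columns) - 1]

# feature selection: score every feature with chi2
test = SelectKBest(score_func=chi2, k="all")
fit = test.fit(X, Y)

# summarize scores
numpy.set_printoptions(precision=3)
print("scores:")
print(fit.scores_)
for i in range(len(fit.scores_)):
    print(str(dataframe.columns.values[i]) + " " + str(fit.scores_[i]))

features = fit.transform(X)
print(list(dataframe))
numpy.set_printoptions(threshold=numpy.inf)

#output
#scores:
#[11.475 0.142 15.527]
#URL_link0 11.475409836065575
#URL_link1 0.14227166276346598
#URL_link2 15.526957539965377
#['URL_link0', 'URL_link1', 'URL_link2', 'Y']
```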
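As a rough check on where these numbers come from, the sketch below recomputes chi2 scores by hand for non-negative features: for each feature it compares the observed per-class totals with the totals expected if the feature and Y were independent, and sums the squared differences divided by the expected values. This is my understanding of what sklearn.feature_selection.chi2 computes; the helper chi2_scores and the eight rows of data are hypothetical.

```python
import numpy as np
from sklearn.feature_selection import chi2


def chi2_scores(X, y):
    """Recompute chi-squared scores for non-negative (e.g. one-hot) features."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    classes = np.unique(y)
    # One indicator column per class: Y_ind[i, c] = 1 if sample i is in class c
    Y_ind = (y[:, None] == classes[None, :]).astype(float)
    observed = Y_ind.T @ X                          # per-class total of each feature
    feature_count = X.sum(axis=0, keepdims=True)    # overall total of each feature
    class_prob = Y_ind.mean(axis=0, keepdims=True)  # fraction of samples per class
    expected = class_prob.T @ feature_count         # totals if feature and class were independent
    return ((observed - expected) ** 2 / expected).sum(axis=0)


# Hypothetical one-hot data: columns are URL_link0, URL_link1, URL_link2
X = np.array([[1, 0, 0], [1, 0, 0], [0, 1, 0], [0, 1, 0],
              [0, 0, 1], [0, 0, 1], [1, 0, 0], [0, 0, 1]])
y = np.array([0, 0, 0, 1, 1, 1, 0, 1])

manual = chi2_scores(X, y)
print(manual)
print(chi2(X, y)[0])  # sklearn's scores, for comparison
```

Here URL_link1 appears once in each class, exactly as independence would predict, so its score is 0, while URL_link0 and URL_link2 each appear in only one class and score highest.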

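Another algorithm that we can use is **ExtraTreesClassifier** from the Python machine learning library sklearn (scikit-learn). It fits an ensemble of randomized decision trees and reports an importance score for each feature in feature_importances_; SelectFromModel then keeps the features whose importance reaches a threshold, which by default is the mean importance.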

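```python
from sklearn.ensemble import ExtraTreesClassifier
from sklearn.feature_selection import SelectFromModel

# X and Y are the feature matrix and target prepared above
# no random_state is set, so the importances can differ slightly between runs
clf = ExtraTreesClassifier()
clf = clf.fit(X, Y)
print(clf.feature_importances_)

# keep only the features whose importance is at least the mean importance
model = SelectFromModel(clf, prefit=True)
X_new = model.transform(X)
print(X_new.shape)

#output
#[0.424 0.041 0.536]
#(150, 2)
```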
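The (150, 2) shape means SelectFromModel kept two of the three columns. With the importances printed above the mean is about 0.334, so URL_link0 and URL_link2 pass and URL_link1 is dropped. A minimal sketch of that rule, on hypothetical one-hot data and with a fixed random_state (both assumptions, not part of the original post), checks that get_support() matches a direct comparison against the mean importance.

```python
import numpy as np
from sklearn.ensemble import ExtraTreesClassifier
from sklearn.feature_selection import SelectFromModel

# Hypothetical one-hot data (columns: URL_link0, URL_link1, URL_link2)
rng = np.random.default_rng(0)
links = rng.integers(0, 3, size=150)
X = np.eye(3, dtype=int)[links]
# Assumed outcome: link2 always motivates, link0 never does, link1 is a coin flip
y = np.where(links == 2, 1, np.where(links == 0, 0, rng.integers(0, 2, size=150)))

clf = ExtraTreesClassifier(random_state=0).fit(X, y)  # fixed seed for repeatable importances
model = SelectFromModel(clf, prefit=True)             # default threshold: mean importance

importances = clf.feature_importances_
mask = model.get_support()
print(importances, importances.mean())
print(mask)  # True for every feature whose importance is >= the mean
```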

The two algorithms above helped us estimate the importance of our features (actions) for the Y variable. In both cases URL_link2 got the highest score, followed by URL_link0, while URL_link1 scored lowest.
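To compare the two methods side by side, here is a hypothetical helper, compare_importance, that puts the chi2 scores and the ExtraTrees importances in one pandas table together with each feature's rank under each method. The data (only link2 leads to motivation) and the random_state are assumptions for illustration; on the real data the numbers would come from data.csv.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import ExtraTreesClassifier
from sklearn.feature_selection import chi2


def compare_importance(X, y, names, random_state=0):
    """Put chi2 scores and ExtraTrees importances side by side, with ranks."""
    chi2_scores, _ = chi2(X, y)
    clf = ExtraTreesClassifier(random_state=random_state).fit(X, y)
    table = pd.DataFrame(
        {"chi2": chi2_scores, "extra_trees": clf.feature_importances_}, index=names
    )
    # Rank 1 = highest score under each method; ties share the best rank
    for col in ["chi2", "extra_trees"]:
        table[col + "_rank"] = table[col].rank(ascending=False, method="min").astype(int)
    return table.sort_values("chi2", ascending=False)


# Hypothetical data in the same one-hot layout as the post
rng = np.random.default_rng(1)
links = rng.integers(0, 3, size=150)
X = np.eye(3, dtype=int)[links]
y = (links == 2).astype(int)  # assumed: only link2 leads to motivation

table = compare_importance(X, y, ["URL_link0", "URL_link1", "URL_link2"])
print(table)
```

Agreement between the two rank columns is what the comparison above relies on; when they disagree, it is a hint to look more closely at that feature.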

Other methods exist as well. I would love to hear which methods you use, and for what datasets or problems. Also feel free to leave feedback, comments or questions.


Below is the full Python source code.

```python
# -*- coding: utf-8 -*-
import pandas
import numpy
from sklearn.feature_selection import SelectKBest
from sklearn.feature_selection import chi2
from sklearn.ensemble import ExtraTreesClassifier
from sklearn.feature_selection import SelectFromModel

filename = "C:\\Users\\drm\\data.csv"
dataframe = pandas.read_csv(filename)

# one-hot encode URL into 0/1 integer columns and move the target Y to the end
dataframe = pandas.get_dummies(dataframe, dtype=int)
cols = dataframe.columns.tolist()
cols.insert(len(dataframe.columns) - 1, cols.pop(cols.index('Y')))
dataframe = dataframe.reindex(columns=cols)
print(dataframe)
print(len(dataframe.columns))

# features X are all columns except the last; the target Y is the last column
array = dataframe.values
X = array[:, 0:len(dataframe.columns) - 1]
Y = array[:, len(dataframe.columns) - 1]
print("--X----")
print(X)
print("--Y----")
print(Y)

# feature selection with chi2 scores
test = SelectKBest(score_func=chi2, k="all")
fit = test.fit(X, Y)

# summarize scores
numpy.set_printoptions(precision=3)
print("scores:")
print(fit.scores_)
for i in range(len(fit.scores_)):
    print(str(dataframe.columns.values[i]) + " " + str(fit.scores_[i]))

features = fit.transform(X)
print(list(dataframe))
numpy.set_printoptions(threshold=numpy.inf)
print("features")
print(features)

# feature importances from an ensemble of randomized trees
clf = ExtraTreesClassifier()
clf = clf.fit(X, Y)
print("feature_importances")
print(clf.feature_importances_)

# keep the features whose importance is at least the mean importance
model = SelectFromModel(clf, prefit=True)
X_new = model.transform(X)
print(X_new.shape)
```
