I often come across web posts about extracting data (data scraping) from websites. For example recently in [1] Scrapy tool was used for web scraping with Python. Once we get scraping data we can use extracted information in many different ways. As computer algorithms evolve and can do more, the number of cases where machine learning is used to get insights from extracted data is increasing. In the case of extracted data from text, exploring commonly co-occurring terms can give useful information.

In this post we will see the example of such usage including computing of correlation.

Our example is taken from [2] where job site was scraped and job descriptions were processed further to extract information about requested skills. The job description text was analyzed to explore commonly co-occurring technology-related terms, focusing on frequent skills required by employers.

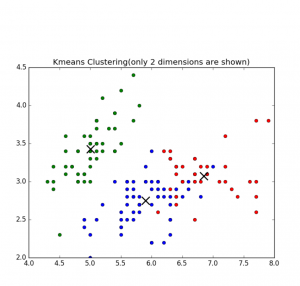

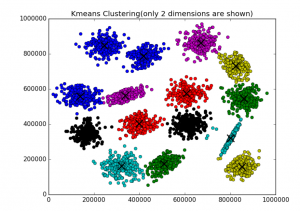

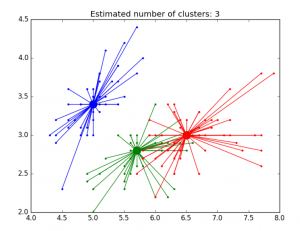

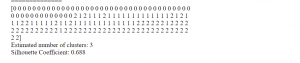

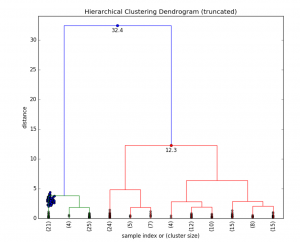

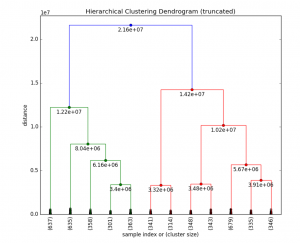

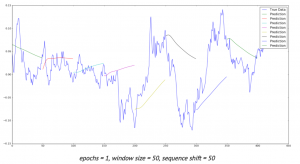

Data visualization also was performed – the graph was created to show connections between different words (skills) for the few most frequent terms. This looks useful as the user can see related skills for the given term which can be not visible from text ads.

The plot was built based on correlations between words in the text, so it is possible also to visualize the strength of connections between words.

Inspired by this example I built the python script that can calculate correlation and does the following:

- Opens csv file with the text data and load data into memory. (job descriptions are only in one column)

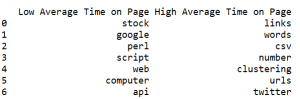

- Counts top N number based on the frequency (N is the number that should be set, for example N=5)

- For each word from the top N words it calculate correlation between this word and all other words.

- The words with correlation more than some threshold (0.4 for example) are saved to array and then printed as pair of words and correlation between them. This is the final output of the script. This result can be used for printing graph of connections between words.

Python function pearsonr was used for calculating correlation. It allows to calculate Pearson correlation coefficient which is a measure of the linear correlation between two variables X and Y. It has a value between +1 and −1, where 1 is total positive linear correlation, 0 is no linear correlation, and −1 is total negative linear correlation. It is widely used in the sciences.[4]

The function pearsonr returns two values: pearson coefficient and the p-value for testing non-correlation. [5]

The script is shown below.

Thus we saw how data scraping can be used together with machine learning to produce meaningful results.

The created script allows to calculate correlation between terms in the corpus that can be used to draw plot of connections between the words like it was done in [2].

See how to do web data scraping here with newspaper python module or with beautifulsoup module

Here you can find how to build graph plot

# -*- coding: utf-8 -*-

import numpy as np

import nltk

import csv

import re

from scipy.stats.stats import pearsonr

def remove_html_tags(text):

"""Remove html tags from a string"""

clean = re.compile('<.*?>')

return re.sub(clean, '', text)

fn="C:\\Users\\Owner\\Desktop\\Scrapping\\datafile.csv"

docs=[]

def load_file(fn):

start=1

file_urls=[]

strtext=""

with open(fn, encoding="utf8" ) as f:

csv_f = csv.reader(f)

for i, row in enumerate(csv_f):

if i >= start :

file_urls.append (row)

strtext=strtext + str(stripNonAlphaNum(row[5]))

docs.append (str(stripNonAlphaNum(row[5])))

return strtext

# Given a text string, remove all non-alphanumeric

# characters (using Unicode definition of alphanumeric).

def stripNonAlphaNum(text):

import re

return re.compile(r'\W+', re.UNICODE).split(text)

txt=load_file(fn)

print (txt)

tokens = nltk.wordpunct_tokenize(str(txt))

my_count = {}

for word in tokens:

try: my_count[word] += 1

except KeyError: my_count[word] = 1

data = []

sortedItems = sorted(my_count , key=my_count.get , reverse = True)

item_count=0

for element in sortedItems :

if (my_count.get(element) > 3):

data.append([element, my_count.get(element)])

item_count=item_count+1

N=5

topN = []

corr_data =[]

for z in range(N):

topN.append (data[z][0])

wcount = [[0 for x in range(500)] for y in range(2000)]

docNumber=0

for doc in docs:

for z in range(item_count):

wcount[docNumber][z] = doc.count (data[z][0])

docNumber=docNumber+1

print ("calc correlation")

for ii in range(N-1):

for z in range(item_count):

r_row, p_value = pearsonr(np.array(wcount)[:, ii], np.array(wcount)[:, z])

print (r_row, p_value)

if r_row > 0.4 and r_row < 1:

corr_data.append ([topN[ii], data[z][0], r_row])

print ("correlation data")

print (corr_data)

References

1. Web Scraping in Python using Scrapy (with multiple examples)

2. What Technology Skills Do Developers Need? A Text Analysis of Job Listings in Library and Information Science (LIS) from Jobs.code4lib.org

3. Scrapy Documentation

4. Pearson correlation coefficient

5. scipy.stats.pearsonr

You must be logged in to post a comment.